Picture this: a safety manager on a high-risk worksite is manually cross-checking 200 contractor documents, chasing expired tickets via email, and trying to remember which subcontractor's white card expires next Thursday.

Now picture a major bank in London where an entire team — a Responsible AI team sitting inside the Chief Data & Analytics Office — exists solely to ensure their AI systems are compliant, auditable, ethical, and fit for purpose. That function is one of the fastest-growing in financial services right now.

These two scenes look nothing alike. But they're dealing with the same underlying challenge — and financial services figured out the answer first.

AI Governance Has Arrived. Here's Why Safety Industries Are Next.

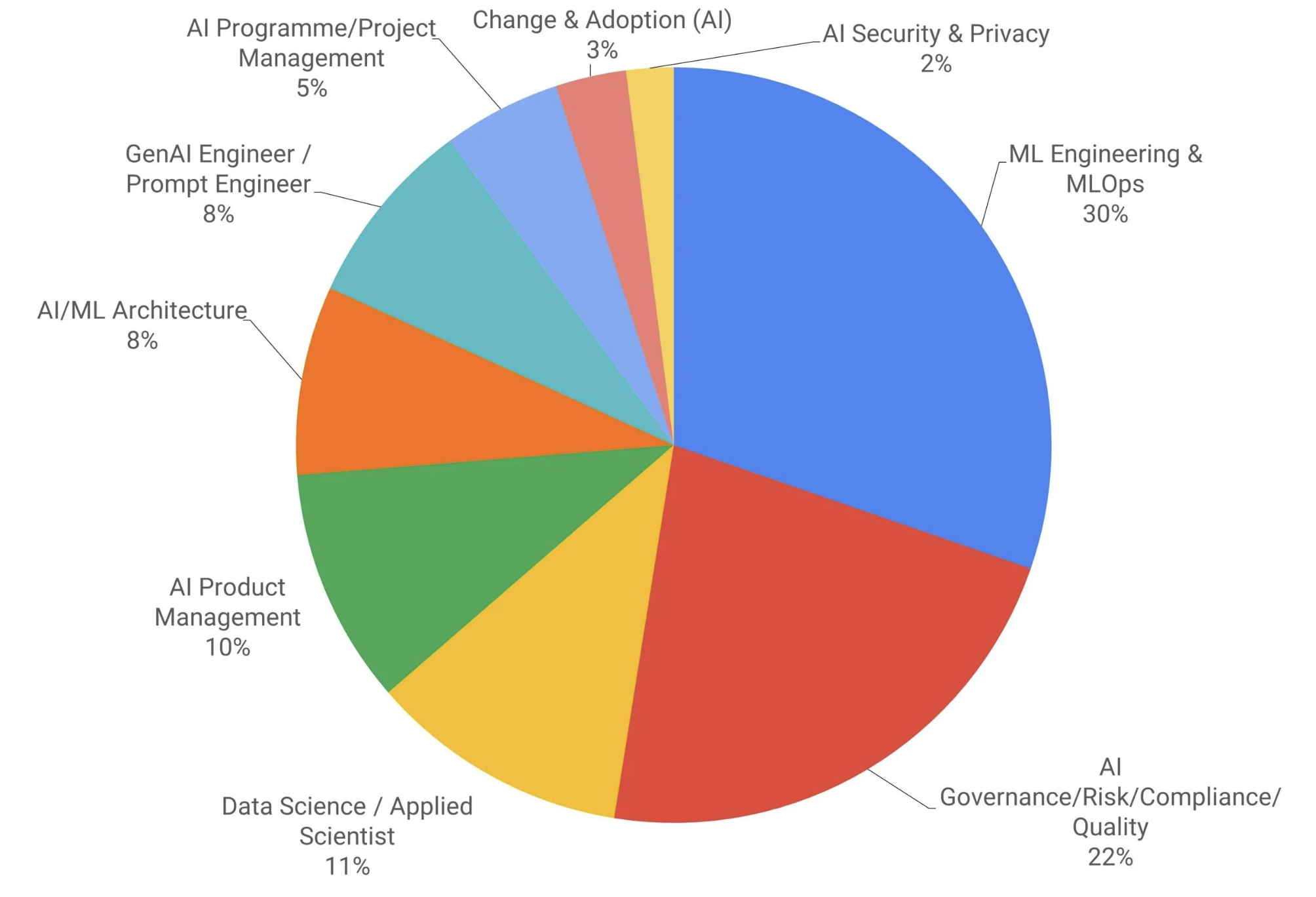

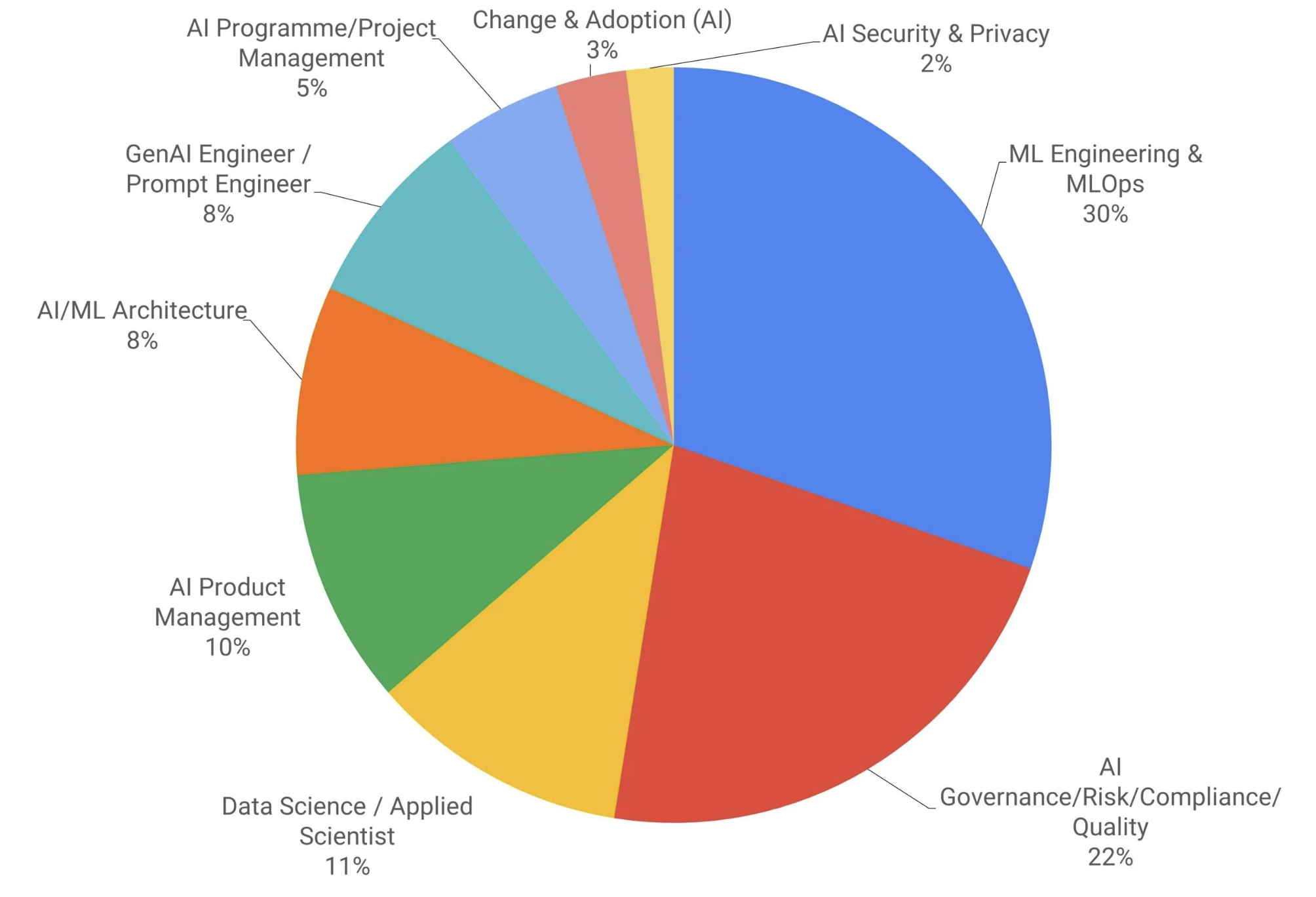

An AI skills report by AI Solutions by equitably.ai, based on analysis of over 120 job postings across major job boards, found that AI Governance, Risk, and Compliance was the fastest-growing AI role category throughout 2025. By early 2026, almost every major UK bank had stood up a dedicated AI governance function — and the trend has since spread well beyond financial services. The EU AI Act, the UK's FCA guidance, and equivalent regulatory pressures in other markets made it non-negotiable: if you're deploying AI, you need a structured framework to govern it.

This didn't happen because those institutions suddenly became altruistic. It happened because regulators made it clear that deploying AI without governance creates legal, financial, and reputational risk that no organisation can afford.

Sound familiar?

Australia's WHS legislation operates on the same logic. The duty of care doesn't disappear because you've automated part of your contractor onboarding process. If anything, it sharpens — because now you're also responsible for ensuring the AI tool is performing as intended, producing auditable outputs, and not creating gaps in your compliance coverage.

The construction, mining, energy, and logistics sectors are heading toward the same regulatory reckoning that heavily regulated industries already went through. The question is whether you want to be ahead of it or scrambling to catch up.

The Parallel Is Closer Than You Think

Let's lay it out directly.

In heavily regulated industries like financial services:

- Regulators and legislation (the FCA, the EU AI Act) require that AI systems used in regulated activities are explainable, auditable, and governed by clear accountability frameworks

- Organisations that deployed AI without governance faced regulatory scrutiny and had to retrofit controls at significant cost

- The firms that built governance first moved faster, with more confidence, and with less remediation risk

In safety-critical industries like construction, mining, and utilities:

- WHS regulators (the state and territory WHS authorities, supported by national policy from Safe Work Australia) require that duty of care obligations are met regardless of how compliance processes are managed, automated or otherwise

- Organisations that adopt AI tools without clear oversight frameworks risk creating compliance gaps they can't defend in an incident investigation

- The organisations that build structured, auditable AI-assisted compliance processes now will have a meaningful advantage when regulatory scrutiny of AI use in safety contexts inevitably increases

The industries are different. The regulatory logic is identical.

"AI Role Demand by Category" — equitably.ai, Q3 2025 AI Skills Report

That chart tells the story plainly. ML Engineering dominates at 30% — that's the "build the AI" work. But AI Governance/Risk/Compliance/Quality sits at 22% and was the fastest-growing category throughout 2025. The market is not just building AI. It's building the guardrails around it. And in safety-critical industries, those guardrails aren't optional.

What "AI Governance" Actually Looks Like in High-Risk Work Environments

You don't need a Responsible AI Lead with a post-grad in machine learning. What you need is a clear answer to four questions every time AI is involved in a compliance or safety process:

- Who is accountable when the AI output is wrong? AI-assisted document review, automated induction systems, and AI-flagged compliance gaps are useful tools, but the duty of care sits with a human. Knowing who owns each AI-assisted process and who checks its outputs is the foundation of any governance framework.

- Can you explain what the AI did, and why? In a post-incident investigation or a regulatory audit, "the system said it was fine" is not an acceptable answer. Your AI tools need to produce traceable, documented outputs that you can walk a regulator through. ComplyFlow's AI-powered document review logs every document review, access decision, and compliance status change, creating the audit trail that governance requires.

- What are the failure modes, and how do you catch them? Every AI tool has edge cases where it performs poorly. In compliance contexts, an undetected failure can mean a non-compliant contractor on site, an expired certification that slips through, or an induction recorded as complete when it wasn't. Building in human review checkpoints for high-stakes decisions is standard practice in well-governed organisations, and it should be standard in safety management too.

- Does your AI observe, or does it judge? An AI that tells a contractor their SWMS is "non-compliant" is making a legal determination, one it isn't qualified to make. An AI that flags a criterion as "not identified" is doing something different: it's reporting an observation and handing the judgement to a human. That distinction matters enormously in a regulated context. Well-governed AI in safety-critical industries is built to identify, not to conclude. The human still owns the decision.
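To make the observe-versus-judge split concrete, here is a minimal Python sketch of how it could be expressed as a data model. It is an illustration only, not ComplyFlow's implementation: the names `Finding`, `Outcome`, `Observation`, `Decision`, `record_decision`, and `needs_attention` are all hypothetical. The idea is that the AI can only ever emit observations, while an outcome cannot be recorded without a named human reviewer.

```python
from dataclasses import dataclass
from enum import Enum


class Finding(Enum):
    """What the AI is allowed to report: observations only (hypothetical)."""
    IDENTIFIED = "identified"
    NOT_IDENTIFIED = "not identified"


class Outcome(Enum):
    """What only a human reviewer is allowed to record (hypothetical)."""
    ACCEPTED = "accepted"
    REJECTED = "rejected"


@dataclass(frozen=True)
class Observation:
    criterion: str   # e.g. "Supervisor signature"
    finding: Finding
    citation: str    # where in the document the AI looked


@dataclass(frozen=True)
class Decision:
    outcome: Outcome
    reviewer: str                          # the accountable human
    observations: tuple[Observation, ...]  # what the AI reported


def record_decision(outcome: Outcome, reviewer: str,
                    observations: list[Observation]) -> Decision:
    """Attach a human-owned outcome to the AI's observations."""
    if not reviewer.strip():
        raise ValueError("a named human reviewer must own the decision")
    return Decision(outcome, reviewer.strip(), tuple(observations))


def needs_attention(observations: list[Observation]) -> list[str]:
    """List the criteria a reviewer should look at first."""
    return [o.criterion for o in observations
            if o.finding is Finding.NOT_IDENTIFIED]
```

In this sketch there is deliberately no "non-compliant" value on the AI side. The only route to an accepted or rejected outcome is through `record_decision`, which refuses to run without a reviewer's name.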

What's at Stake for High-Risk Industries

"AI governance matters" is easy to say and easy to ignore. Here's what it actually means in practice — and what's at stake if you get it wrong.

High-risk industries — construction, mining, energy, logistics — share a common challenge: documentation-heavy compliance obligations, large contractor workforces, and regulators who audit frequently and take non-compliance seriously. When AI is helping manage that compliance workload, the governance questions don't go away. They get sharper.

The most common failure mode isn't the AI doing something dramatically wrong. It's the gap between a controlled proof-of-concept and real-world deployment. A tool that performs well on clean, structured documents in a test environment can struggle when faced with the full variance of contractor submissions in the field — scanned photocopies, phone photos with shadows, fillable PDFs, Word documents with broken tables.

Governance means knowing that gap exists, designing for it, and having human review in place for cases the AI can't handle confidently.

When ComplyFlow processes a safety document, it runs two passes: one reads the document as text and grades it against relevant criteria; the second sends the pages visually so the AI can verify checkboxes, signatures, and blank fields that text extraction misses. Each criterion is graded and cited, giving reviewers exactly the information they need. Missing sign-offs or incomplete sections are flagged at submission — before a human opens the file.
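As a rough illustration of how two passes like these might be reconciled, here is a short Python sketch. It rests on assumptions: the article does not say how the text and visual results are combined, so the rule used here (agreement is reported as-is, disagreement is escalated to a person) is a guess at one reasonable approach, and `PassResult` and `merge_passes` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PassResult:
    criterion: str
    identified: bool
    citation: str  # e.g. "page 3 text" or "page 3 image"


def merge_passes(text_pass: list[PassResult],
                 visual_pass: list[PassResult]) -> list[dict]:
    """Combine both passes per criterion; disagreements go to a human."""
    text = {r.criterion: r for r in text_pass}
    visual = {r.criterion: r for r in visual_pass}
    merged = []
    # dict.fromkeys keeps first-seen order and drops duplicate criteria.
    for criterion in dict.fromkeys([*text, *visual]):
        results = [r for r in (text.get(criterion), visual.get(criterion))
                   if r is not None]
        verdicts = {r.identified for r in results}
        if len(verdicts) > 1:
            # Assumed rule: e.g. the text mentions a signature block
            # but the page image shows it blank.
            status = "needs human review"
        elif verdicts == {True}:
            status = "identified"
        else:
            status = "not identified"
        merged.append({
            "criterion": criterion,
            "status": status,
            "citations": [r.citation for r in results],
        })
    return merged
```

Each merged entry carries the citations from whichever passes covered that criterion, so a reviewer can see what each pass looked at before making the call.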

But here's the question nobody asks until it's too late: when the AI marked a criterion as identified and a non-compliant contractor still ended up on site, can you show a regulator exactly what happened and why?

The answer can't be "the system said it was fine." You need a complete, auditable record of every decision — human or AI-assisted. That's not a nice-to-have. In a post-incident investigation, it's the difference between a defensible position and a very expensive problem.
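What might a "complete, auditable record" look like in practice? One common pattern, sketched below in Python, is an append-only log where each entry stores a hash of the entry before it, so later edits become detectable. This is a generic technique offered as an assumption, not a description of how ComplyFlow stores its logs. The function names and field names (`append_entry`, `verify`, `actor_type`, and so on) are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(entry: dict) -> str:
    """Hash every field of an entry except its own stored hash."""
    body = {k: v for k, v in entry.items() if k != "hash"}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list[dict], actor: str, actor_type: str,
                 action: str, detail: dict) -> dict:
    """Append one decision record, chained to the previous entry's hash."""
    if actor_type not in ("human", "ai"):
        raise ValueError("actor_type must be 'human' or 'ai'")
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "actor_type": actor_type,  # who acted: a person or the AI
        "action": action,
        "detail": detail,          # must be JSON-serialisable
        "prev_hash": log[-1]["hash"] if log else "",
    }
    entry["hash"] = _digest(entry)
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev = ""
    for entry in log:
        if entry["prev_hash"] != prev or entry["hash"] != _digest(entry):
            return False
        prev = entry["hash"]
    return True
```

Recording `actor_type` on every entry is the part that matters most for the question above: it lets you show, step by step, which observations came from the AI and which decisions a person made.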

ComplyFlow: Built for Governed AI Adoption

ComplyFlow has been helping organisations manage contractor compliance and workforce safety since 2009. When we built AI capabilities into the platform, we did it with the same governance logic that other regulated industries have learned the hard way: audit trails matter, human oversight can't be designed out, and compliance tools need to be explainable.

Our AI-powered document review flags issues for human decision — it doesn't make autonomous compliance calls. Our no-code AI agent builder lets safety and operations managers configure automated workflows without losing visibility or control. And every action across the platform is logged, traceable, and audit-ready.

That's not just good product design. It's what responsible AI deployment in safety-critical industries looks like.

The Bottom Line

Other regulated industries didn't get ahead of AI governance because their professionals are more forward-thinking than safety managers. They got ahead because their regulators moved first and they had no choice.

Australia's WHS regulators are catching up. The organisations in construction, mining, energy, and logistics that build governed, auditable, AI-assisted compliance processes now, rather than waiting for a regulatory event to force the issue, will be the ones writing the playbook that everyone else follows.

That's a better position to be in than scrambling to retrofit governance after something goes wrong.